AI TL;DR

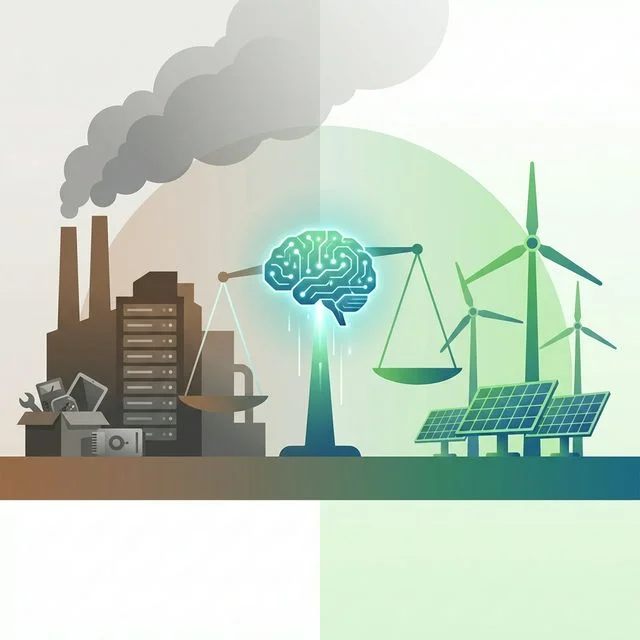

AI data centers now consume more electricity than some countries. As the industry races to scale, the environmental cost is becoming impossible to ignore.

The AI Sustainability Crisis: Can We Power the AI Revolution Without Burning the Planet?

Here's an uncomfortable truth that the AI industry doesn't like to talk about: by a widely cited estimate, a single ChatGPT query uses roughly 10 times as much energy as a Google search. With global AI spending projected to hit $2.5 trillion in 2026, those per-query costs add up to an enormous energy bill.

The Scale of the Problem

AI's Energy Appetite

| Metric | Estimate |
|---|---|
| Global data center electricity | ~1.5% of world electricity (~4% in the US), and growing |
| Single ChatGPT query | ~10x the energy of a Google search |
| Training GPT-5 | Estimated electricity of 1,000+ US homes for a year |
| AI data center water usage | Billions of liters annually for cooling |
| Projected AI energy demand by 2030 | Could double current data center consumption |
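To put that last row in perspective, here is a back-of-the-envelope sketch of the annual growth rate a doubling implies. It assumes "current" means 2026 (the year this article is written from) and steady compound growth. The function name `implied_cagr` is purely illustrative.

```python
# Back-of-the-envelope: what steady annual growth rate doubles consumption by a target year?
# Assumption: the base year is 2026 and growth compounds evenly each year.

def implied_cagr(growth_multiple: float, years: int) -> float:
    """Annual growth rate r such that (1 + r) ** years == growth_multiple."""
    if years <= 0:
        raise ValueError("years must be positive")
    return growth_multiple ** (1 / years) - 1

BASE_YEAR = 2026    # assumed starting point ("current" consumption)
TARGET_YEAR = 2030  # year by which consumption could double

rate = implied_cagr(2.0, TARGET_YEAR - BASE_YEAR)
print(f"Doubling in {TARGET_YEAR - BASE_YEAR} years implies ~{rate:.1%} growth per year")
```

If you measure from an earlier base year, the implied rate drops, because the same doubling is spread over more years.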

The Infrastructure Explosion

Consider the investments announced at the India AI Summit 2026 alone:

- Adani: $100B data center platform

- Reliance: $110B AI infrastructure

- Microsoft: $17.5B India expansion

- Google: $15B AI hub

Every dollar of infrastructure investment translates to massive, ongoing energy consumption.

Why AI Is So Energy-Hungry

Training vs. Inference

Training: Building the model. This is the headline number—training a frontier model can consume the annual electricity of a small city. But training happens once (or a few times).

Inference: Running the model for users. This is where the real scale sits. Billions of queries per day, 24/7/365. Inference energy costs are growing far faster than training costs.
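A rough calculation shows why inference overtakes training over time. The sketch below uses made-up inputs (10,000 MWh to train, 3 Wh per query, one billion queries a day). None of these are published figures for any specific model, and the helper names are hypothetical.

```python
# Hypothetical comparison of one-time training energy vs. cumulative inference energy.
# Every number below is an illustrative assumption, not a measured figure for a real model.

TRAINING_ENERGY_MWH = 10_000       # assumed one-time training cost (MWh)
ENERGY_PER_QUERY_WH = 3.0          # assumed energy per inference query (Wh)
QUERIES_PER_DAY = 1_000_000_000    # assumed daily query volume

def daily_inference_mwh(energy_per_query_wh: float, queries_per_day: int) -> float:
    """Inference energy per day in MWh (1 MWh = 1,000,000 Wh)."""
    return energy_per_query_wh * queries_per_day / 1_000_000

def days_until_inference_matches_training(training_mwh: float,
                                          energy_per_query_wh: float,
                                          queries_per_day: int) -> float:
    """Days of serving after which cumulative inference energy equals the training energy."""
    return training_mwh / daily_inference_mwh(energy_per_query_wh, queries_per_day)

per_day = daily_inference_mwh(ENERGY_PER_QUERY_WH, QUERIES_PER_DAY)
days = days_until_inference_matches_training(
    TRAINING_ENERGY_MWH, ENERGY_PER_QUERY_WH, QUERIES_PER_DAY)
print(f"Inference: {per_day:,.0f} MWh/day; equals the training energy after ~{days:.1f} days")
```

Change the inputs and the break-even point moves. With query volumes in the billions, though, the cumulative inference total soon overtakes the one-time training cost.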

The Scaling Dilemma

The AI industry is caught in a loop:

- Bigger models → better performance → more users

- More users → more inference → more energy

- Competition → even bigger models → repeat

There's no sign this cycle is slowing. Every company is racing to build larger, more capable models.

What the Industry Is Doing

Renewable Energy Commitments

| Company | Renewable Energy Goal |
|---|---|
| | 100% renewable by 2030 |
| Microsoft | 100% renewable by 2025 (claimed) |
| Amazon | Net zero by 2040 |
| Adani | Data centers on renewable energy |

Efficiency Improvements

- Model optimization: Techniques like quantization, distillation, and pruning reduce model size and energy per query

- Mixture of Experts: MoE architectures (like MiniMax) activate only a subset of expert sub-networks for each token, reducing compute per query

- Hardware advances: Specialized AI chips (TPUs, custom ASICs) are more energy-efficient than general GPUs

- Inference optimization: Caching, batching, and intelligent routing reduce unnecessary computation
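As a concrete example of the first technique, here is a minimal sketch of symmetric int8 quantization in plain Python. It is one common scheme among several. Production toolchains typically add calibration data and per-channel scales, and the weights here are toy values. Storing each weight in 1 byte instead of 4 cuts memory by 4x, which reduces how much data has to be moved for each query.

```python
# Minimal sketch of symmetric per-tensor int8 quantization (one scheme among several).
# Toy weights only; real toolchains add calibration, per-channel scales, etc.
from array import array

def quantize_int8(weights):
    """Map floats to int8 codes in [-127, 127] using a single shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float weights from int8 codes."""
    return [c * scale for c in codes]

weights = [0.42, -1.27, 0.05, 0.88, -0.31]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

fp32_bytes = len(array("f", weights).tobytes())  # 4 bytes per float32 weight
int8_bytes = len(array("b", codes).tobytes())    # 1 byte per int8 weight
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"float32: {fp32_bytes} bytes, int8: {int8_bytes} bytes")
print(f"max reconstruction error: {max_error:.4f} (at most scale/2 = {scale / 2:.4f})")
```

The trade-off is a small rounding error per weight (at most half a quantization step), which is why quantized models are usually checked for accuracy loss before deployment.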

Nuclear Energy Revival

Several AI companies are investing in nuclear power:

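- Microsoft signed a 20-year power purchase agreement with Constellation Energy to restart a reactor at Three Mile Island

- Google signed an agreement with Kairos Power to buy electricity from next-generation nuclear reactors

- Amazon is exploring small modular reactors (SMRs)

- OpenAI's Sam Altman invested in Helion (fusion energy)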

The Uncomfortable Questions

Does Better AI Justify the Energy Cost?

The case for: AI can optimize energy grids, accelerate climate research, improve agricultural efficiency, and discover new materials for clean energy. The energy spent on AI could pay for itself through environmental benefits.

The case against: Most AI usage isn't solving climate change—it's generating social media content, writing emails, and creating images. The environmental cost of these convenience applications is harder to justify.

Who Pays the Environmental Cost?

Data centers are increasingly being built in regions with:

- Cheap electricity (which often means fossil fuels)

- Access to water for cooling (even in areas already facing water stress)

- Favorable regulations (which may mean weak environmental protections)

The communities hosting data centers bear the local environmental burden—noise, water consumption, grid strain—while the benefits accrue globally.

Is "Green AI" Just Greenwashing?

When a company says their data centers run on "100% renewable energy," it often means:

- They buy renewable energy certificates (RECs)

- The actual grid power may still come from fossil fuels

- The certificates offset carbon elsewhere but don't reduce local impact

- Real-time matching (using renewable energy at the moment of consumption) is rare
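The gap between certificate-style and real-time matching is easy to see with a toy example. The six-hour profiles below are invented for illustration; a real analysis would use 8,760 hourly values for a year. Over the whole period, renewable supply equals consumption, so annual accounting reports 100%. Hour by hour, only half the load is actually covered, because surplus midday output can't power the data center at night.

```python
# Toy comparison of annual (certificate-style) vs. hourly (real-time) renewable matching.
# The 6-hour profiles are invented for illustration only.

consumption_mwh = [10, 10, 10, 10, 10, 10]  # data center load per hour (assumed flat)
renewable_mwh = [0, 0, 25, 25, 10, 0]       # renewable output attributed to the buyer per hour

def annual_match(load, supply):
    """Share of total load covered by total renewable supply over the period (capped at 100%)."""
    return min(sum(supply) / sum(load), 1.0)

def hourly_match(load, supply):
    """Share of load covered by renewable supply in the same hour; surplus can't carry over."""
    matched = sum(min(load_h, supply_h) for load_h, supply_h in zip(load, supply))
    return matched / sum(load)

print(f"Annual matching: {annual_match(consumption_mwh, renewable_mwh):.0%}")
print(f"Hourly matching: {hourly_match(consumption_mwh, renewable_mwh):.0%}")
```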

What Needs to Change

Industry-Level

- Mandatory energy reporting: AI companies should disclose per-query energy consumption

- Real-time renewable matching: Not just buying credits, but actually using clean energy

- Efficiency standards: Industry benchmarks for energy per useful output

- Water usage transparency: Cooling water consumption should be public
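What might a per-query disclosure look like? One simple approach, sketched below, divides the facility energy used by the serving hardware over a period by the number of queries served. PUE (power usage effectiveness) is the ratio of total facility energy to IT energy. The accelerator count, power draw, and query volume are hypothetical. The sketch also ignores CPUs, networking, and idle capacity, and counting queries rather than "useful outputs" is itself a simplification.

```python
# Hypothetical per-query energy estimate of the kind a disclosure rule might require.
# All inputs are illustrative assumptions. PUE scales IT energy up to include
# cooling and other facility overhead.

def energy_per_query_wh(num_accelerators: int,
                        avg_power_watts: float,
                        hours: float,
                        pue: float,
                        queries_served: int) -> float:
    """Facility energy attributed to each query, in watt-hours."""
    if queries_served <= 0:
        raise ValueError("queries_served must be positive")
    it_energy_wh = num_accelerators * avg_power_watts * hours  # energy drawn by the accelerators
    facility_energy_wh = it_energy_wh * pue                     # add cooling/power-delivery overhead
    return facility_energy_wh / queries_served

# Assumed: 8 accelerators averaging 700 W for one hour at PUE 1.2, serving 10,000 queries.
estimate = energy_per_query_wh(8, 700.0, 1.0, 1.2, 10_000)
print(f"~{estimate:.2f} Wh per query")
```

A standardized version would need to pin down exactly these choices (what hardware counts, which period, what counts as a query) so that numbers from different providers are comparable.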

Technology-Level

- Smaller, smarter models: Not every task needs a 1-trillion-parameter model

- Edge computing: Running smaller models on devices reduces data center load

- Model routing: Send simple queries to efficient models, complex ones to capable models

- Carbon-aware computing: Schedule non-urgent tasks during low-carbon grid periods
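Carbon-aware computing can be as simple as choosing when to start a deferrable job. The sketch below picks the lowest-carbon window from an hourly grid carbon-intensity forecast. The forecast values, job size, and function name are hypothetical, and the sketch assumes the job draws power evenly across the hours it runs.

```python
# Minimal carbon-aware scheduler (hypothetical helper): choose the start hour that minimizes
# average grid carbon intensity (gCO2/kWh) for a deferrable job that must finish by a deadline.

def best_start_hour(forecast, job_hours, deadline_hour):
    """Return (start_hour, avg_intensity) for the cleanest contiguous window of job_hours
    that ends at or before deadline_hour (an index into the hourly forecast)."""
    if job_hours <= 0 or job_hours > deadline_hour or deadline_hour > len(forecast):
        raise ValueError("job cannot be scheduled within the forecast and deadline")
    best = None
    for start in range(deadline_hour - job_hours + 1):
        window = forecast[start:start + job_hours]
        avg = sum(window) / job_hours
        if best is None or avg < best[1]:
            best = (start, avg)
    return best

# Hypothetical 12-hour forecast of grid carbon intensity in gCO2/kWh.
forecast = [450, 430, 400, 320, 210, 180, 190, 260, 380, 470, 500, 480]
job_energy_kwh = 500  # assumed total energy of the job, drawn evenly across its hours

start, avg = best_start_hour(forecast, job_hours=3, deadline_hour=12)
naive_avg = sum(forecast[:3]) / 3  # average intensity if the job started immediately

# gCO2/kWh * kWh = grams; divide by 1,000 for kilograms.
print(f"Best start: hour {start}, ~{avg * job_energy_kwh / 1000:.0f} kg CO2")
print(f"Start now:  hour 0, ~{naive_avg * job_energy_kwh / 1000:.0f} kg CO2")
```

Real deployments would pull forecasts from a grid-data provider and may also shift work between regions. The core idea is the same: the same kilowatt-hours emit less when they're consumed during a cleaner hour.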

User-Level

- Be intentional: Don't use AI for tasks you can do just as easily yourself

- Choose efficient models: Use smaller models when appropriate

- Batch requests: Ask related questions in one conversation rather than opening separate sessions

- Support transparency: Prefer providers who disclose environmental impact

The Bottom Line

The AI industry has a sustainability problem that's growing rapidly. The good news: the technical solutions exist, from efficient models to renewable energy and smarter infrastructure. The bad news: market incentives currently favor capability over efficiency.

This is a solvable problem, but only if the industry, regulators, and users take it seriously before the environmental costs compound beyond easy repair. The choices made in 2026 will shape AI's environmental legacy for decades.