AI TL;DR

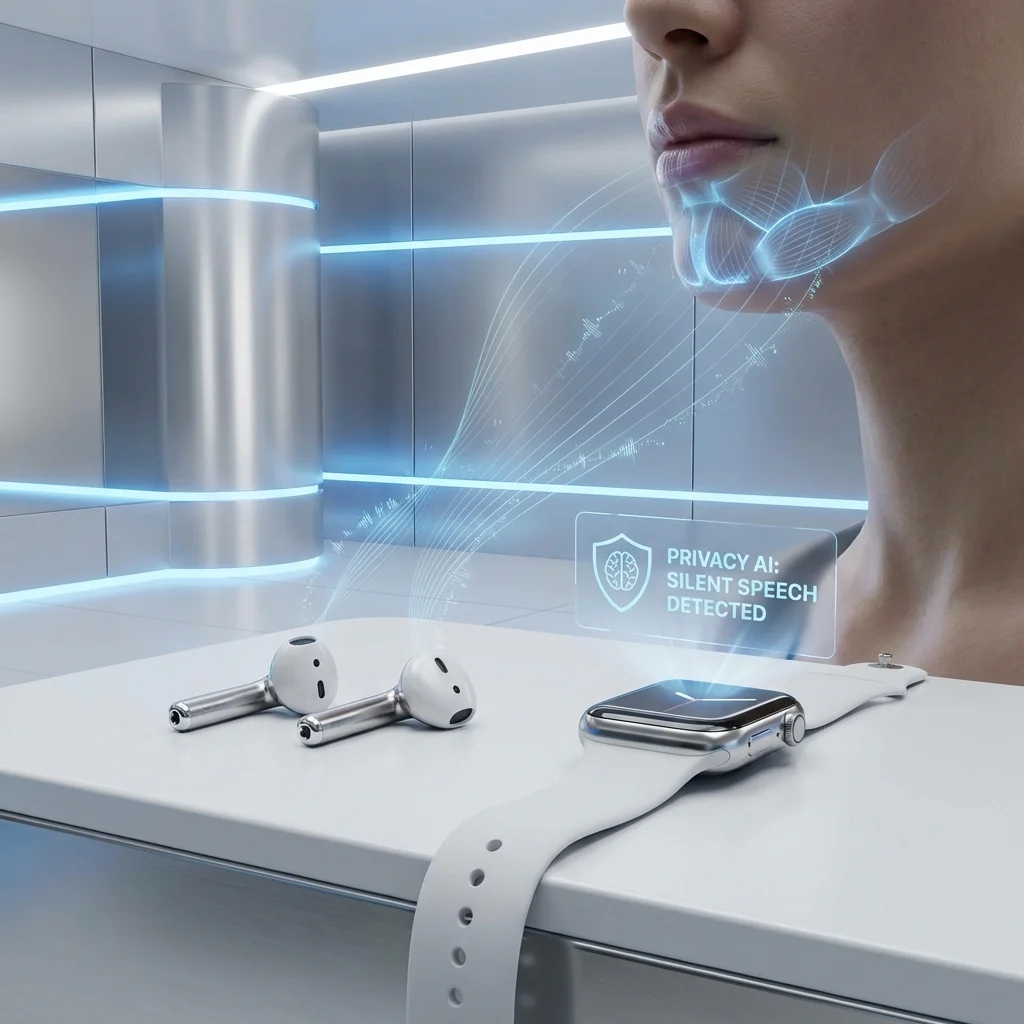

Apple's second-largest acquisition ever brings 'silent speech' technology to Siri. Here's what Q.ai's audio AI means for AirPods, Apple Watch, Vision Pro, and the rumored Apple AI Pin.

Apple Acquires Q.ai for $2B: Silent Speech AI and the Future of Wearables

In late January 2026, Apple confirmed its acquisition of Q.ai, an Israeli startup specializing in "silent speech" audio AI, for approximately $2 billion. This marks Apple's second-largest acquisition in history, behind only the 2014 Beats Electronics purchase.

What Is Q.ai?

Founded in 2022 in Tel Aviv, Q.ai develops AI technology that can:

- Interpret whispered speech at volumes inaudible to bystanders

- Analyze micro facial movements to understand intended speech

- Enhance audio in extremely loud environments

- Read emotional intent from subtle vocal patterns

┌──────────────────────────────────────────────────────────────────┐
│                      Q.AI TECHNOLOGY STACK                       │
├──────────────────────────────────────────────────────────────────┤
│                                                                  │
│  ┌────────────────────────────────────────────────────────────┐  │
│  │                       INPUT SOURCES                        │  │
│  ├──────────────────┬───────────────────┬─────────────────────┤  │
│  │ Microphone       │ Bone Conduction   │ Camera (optional)   │  │
│  │ (whispers)       │ (sub-vocal)       │ (lip reading)       │  │
│  └────────┬─────────┴─────────┬─────────┴──────────┬──────────┘  │
│           │                   │                    │             │
│           ▼                   ▼                    ▼             │
│  ┌────────────────────────────────────────────────────────────┐  │
│  │              MULTIMODAL SPEECH UNDERSTANDING               │  │
│  │                                                            │  │
│  │  • Ultra-quiet speech recognition (20dB whispers)          │  │
│  │  • Facial muscle pattern analysis                          │  │
│  │  • Bone conduction vibration interpretation                │  │
│  │  • Environmental noise cancellation                        │  │
│  │                                                            │  │
│  └────────────────────────────────────────────────────────────┘  │
│                                │                                 │
│                                ▼                                 │
│  ┌────────────────────────────────────────────────────────────┐  │
│  │                           OUTPUT                           │  │
│  │  • Transcribed text                                        │  │
│  │  • Siri command execution                                  │  │
│  │  • Emotion/intent metadata                                 │  │
│  └────────────────────────────────────────────────────────────┘  │
│                                                                  │
└──────────────────────────────────────────────────────────────────┘

The core innovation: communicating with AI assistants discreetly, without anyone around you hearing your commands.
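For a sense of scale, decibels are logarithmic, so the gap between a 20 dB whisper and ordinary speech is much larger than the numbers suggest. The sketch below uses the standard decibel definitions to convert a level difference into a ratio. The function names are illustrative, and the 60 dB reference for normal speech is the figure this article uses for normal-volume speech.

```python
def pressure_ratio(db_difference: float) -> float:
    """Sound-pressure ratio for a level difference in dB (20 * log10 convention)."""
    return 10 ** (db_difference / 20)


def intensity_ratio(db_difference: float) -> float:
    """Sound-intensity (power) ratio for a level difference in dB (10 * log10 convention)."""
    return 10 ** (db_difference / 10)


# Normal speech (assumed 60 dB) versus a 20 dB whisper: a 40 dB gap.
gap_db = 60 - 20
print(f"Pressure ratio:  {pressure_ratio(gap_db):,.0f}x")
print(f"Intensity ratio: {intensity_ratio(gap_db):,.0f}x")
```

Under these assumptions, a 20 dB signal has about one hundredth of the sound pressure of normal speech, which is why conventional microphones and recognizers struggle with it.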

The Acquisition Details

| Aspect | Details |
|---|---|
| Price | ~$2 billion |
| Team | 85 employees join Apple |
| CEO | Aviad Maizels (joins Apple AI team) |
| IP | 47 patents in audio AI |
| Timeline | Deal closed January 2026 |

The PrimeSense Connection

Q.ai's CEO Aviad Maizels has history with Apple—he was a co-founder of PrimeSense, the Israeli startup Apple acquired in 2013 for $360 million. PrimeSense's 3D sensing technology became the foundation for Face ID.

This track record likely strengthened Apple's confidence in the deal.

What This Means for Apple Products

AirPods Pro 3 (Expected 2026-2027)

The most immediate application:

| Feature | Current | With Q.ai |
|---|---|---|
| Voice Commands | Normal speech | Whispers work |
| Noise Handling | Good ANC | Enhanced in extreme noise |
| Emotion Detection | None | Stress/mood awareness |
| Privacy | Others hear commands | Silent commands |

Imagine asking Siri to send a message on a crowded train—without anyone hearing you.

Apple Watch Ultra 3

With bone conduction and facial muscle sensors:

- Sub-vocal commands: Control apps without speaking aloud

- Health insights: Detect stress from vocal patterns

- Emergency features: Silent emergency calls in dangerous situations

Vision Pro 2

Spatial computing with silent interaction:

- Mixed reality without isolation: Interact with virtual content while appearing engaged with reality

- Accessibility: Users with speech impairments can command via facial movements

- Social acceptability: Reduce the awkwardness of talking to air

The Apple AI Pin (Rumored for 2027)

Q.ai's technology seems tailor-made for Apple's rumored wearable AI pin:

| Apple AI Pin Rumor | Q.ai Capability | Fit |
|---|---|---|
| Always-listening assistant | Silent speech input | ✅ Perfect |
| Discreet form factor | Whispered commands | ✅ Perfect |
| Camera for context | Lip reading backup | ✅ Perfect |
| All-day battery | Efficient processing | 🔄 Likely |

"Apple has bought the exact technology needed to make a wearable AI assistant that doesn't make you look like you're talking to yourself in public." — Mark Gurman, Bloomberg

Technical Deep Dive

Sub-Vocal Speech Recognition

Q.ai's core technology detects speech before it becomes audible:

- Muscle Movement: Electrodes detect tiny movements in throat/jaw muscles

- Bone Conduction: Captures vibrations traveling through skull

- Air Conduction: Ultra-sensitive microphones for whispers

- Lip Reading: Camera-based backup for challenging environments

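# Conceptual multimodal fusion (illustrative sketch, not Q.ai's actual code)
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class SilentSpeechRecognizer:
    # Each component is an injected callable; real models would be plugged in here.
    emg_encoder: Callable[[Any], Any]
    bone_encoder: Callable[[Any], Any]
    audio_encoder: Callable[[Any], Any]
    vision_encoder: Callable[[Any], Any]
    compute_confidence: Callable[[Any], Any]
    fusion_network: Callable[..., Any]
    decoder: Callable[[Any], str]

    def recognize(self, inputs: Any) -> str:
        # Encode each input modality separately
        muscle_signal = self.emg_encoder(inputs.muscle_data)
        bone_signal = self.bone_encoder(inputs.bone_conduction)
        air_signal = self.audio_encoder(inputs.whisper_audio)
        lip_signal = self.vision_encoder(inputs.lip_video)

        # Fuse the encodings, weighting each by its estimated confidence
        fused = self.fusion_network(
            muscle_signal,
            bone_signal,
            air_signal,
            lip_signal,
            weights=self.compute_confidence(inputs),
        )
        # Decode the fused representation into text
        return self.decoder(fused)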
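To make "fusion with confidence weighting" more concrete, here is a minimal sketch of one common approach: a softmax over per-modality confidence scores, followed by a weighted average of the modality embeddings. This is an assumption for illustration only. Nothing in the article confirms how Q.ai actually fuses its signals, and the `fuse` function and its inputs are hypothetical.

```python
import math
from typing import List, Optional, Sequence


def fuse(
    embeddings: Sequence[Optional[Sequence[float]]],
    confidences: Sequence[float],
) -> List[float]:
    """Confidence-weighted average of the modality embeddings that are present.

    A modality that produced no signal (for example, no camera) is passed as None.
    """
    present = [(e, c) for e, c in zip(embeddings, confidences) if e is not None]
    if not present:
        raise ValueError("at least one modality is required")

    # Softmax over confidence scores: weights are positive and sum to 1.
    peak = max(c for _, c in present)
    exps = [math.exp(c - peak) for _, c in present]
    total = sum(exps)
    weights = [x / total for x in exps]

    # Weighted sum, computed dimension by dimension.
    dim = len(present[0][0])
    return [
        sum(w * e[i] for w, (e, _) in zip(weights, present))
        for i in range(dim)
    ]


# Hypothetical 2-dimensional embeddings for muscle and bone-conduction signals;
# the audio and camera modalities are missing in this example.
emg = [1.0, 0.0]
bone = [0.0, 1.0]
print(fuse([emg, bone, None, None], [0.0, 0.0, 0.0, 0.0]))
```

With equal confidence scores the two available modalities contribute equally. Raising one score shifts the fused vector toward that modality, which is the behavior you would want when, say, ambient noise makes the microphone unreliable.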

Accuracy at Different Volumes

| Speech Volume | Traditional ASR Accuracy | Q.ai Accuracy |
|---|---|---|
| Normal (60 dB) | 98% | 99% |
| Quiet (40 dB) | 85% | 97% |
| Whisper (30 dB) | 40% | 94% |
| Sub-vocal (20 dB) | Fails | 88% |
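Accuracy percentages can hide how large the gap is, so it helps to restate the table as a relative reduction in errors. The short sketch below does that arithmetic using only the figures quoted in the table. The helper name is illustrative, and the sub-vocal row is left out because the baseline has no numeric value.

```python
# Accuracy figures (percent) copied from the table: (traditional ASR, Q.ai).
ACCURACY = {
    "Normal (60 dB)": (98, 99),
    "Quiet (40 dB)": (85, 97),
    "Whisper (30 dB)": (40, 94),
}


def relative_error_reduction(baseline_acc: float, new_acc: float) -> float:
    """Fraction of the baseline's errors that the new system eliminates."""
    baseline_err = 100 - baseline_acc
    new_err = 100 - new_acc
    if baseline_err == 0:
        raise ValueError("baseline has no errors to reduce")
    return (baseline_err - new_err) / baseline_err


for label, (baseline, new) in ACCURACY.items():
    reduction = relative_error_reduction(baseline, new)
    print(f"{label}: {reduction:.0%} fewer errors")
```

On the quoted numbers this works out to 50% fewer errors at normal volume, 80% at quiet volume, and 90% for whispers, so the claimed advantage grows as speech gets quieter.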

On-Device Processing

Critically, Q.ai's models run entirely on-device:

- No cloud round-trip required

- Privacy by design

- Works offline

- Low latency (<100ms)

This aligns perfectly with Apple's privacy-first approach.

Competitive Implications

For Siri

Siri has historically lagged behind competitors in capability. Silent speech changes the competitive dynamic:

| Advantage | Impact |
|---|---|
| Privacy | Users more willing to use voice AI in public |
| Discretion | No social awkwardness |
| Accessibility | New user groups (speech impairments) |
| Novel Interactions | Commands impossible with normal speech |

For Competitors

| Competitor | Challenge |
|---|---|
| Google Assistant | Needs a similar acquisition or in-house development |
| Amazon Alexa | Wearables aren't a core competency |
| OpenAI | No hardware platform |
| Humane (AI Pin) | Apple is entering its market with superior tech |

The Humane AI Pin—which requires speaking aloud—suddenly looks less compelling if Apple's version accepts whispers.

Timeline Expectations

| Milestone | Expected Date |
|---|---|
| Q.ai team integration | Q1-Q2 2026 |
| Developer beta (Siri improvements) | Q3 2026 |
| AirPods Pro 3 with silent speech | Q4 2026 or Q1 2027 |
| Apple Watch with sub-vocal | 2027 |
| Apple AI Pin (if real) | 2027-2028 |

Broader Industry Impact

Voice AI Becomes More Viable

The awkwardness of voice assistants has limited adoption:

"I don't use Siri in public because I don't want to look like I'm talking to myself." — Common user feedback

Silent speech eliminates this barrier, potentially accelerating voice AI adoption across the industry.

Wearables Get Smarter

As computing moves from phones to wearables, new input methods become critical:

| Input Method | Screen | Wearable Fit |
|---|---|---|
| Touch | Required | Limited |
| Voice | ❌ Awkward | With silent speech: ✅ |
| Gesture | Limited | Some potential |
| Neural | Future | Far future |

Silent speech may be the key input innovation that makes always-on wearable AI practical.

Conclusion

Apple's $2 billion acquisition of Q.ai is a strategic investment in the post-smartphone future. By enabling discreet, whispered, or even sub-vocal communication with AI assistants, Apple is positioning itself for a world where:

- Wearables replace phones for many tasks

- AI assistance is ambient and always available

- Privacy and social acceptability are preserved

For users frustrated by Siri's limitations, this acquisition suggests meaningful improvements ahead. For competitors, it's a signal that Apple is serious about the AI wearables race.

Would you use sub-vocal or whispered commands with Siri? Share your thoughts below.